Repairing an array takes just a couple of SSH commands, and does not require any software to be installed. Setting up an email address to receive raid status changes and failure notifications is similarly easy. Looking into the raid status is as simple as a one line shell command “cat /proc/mdstat”. With software raid we don’t have any of these problems. Because of these hassles, often times drive failures go unnoticed for an extended period of time, making it much more likely to lose an entire raid array and all of the data on it. Finally, if you want to receive notifications about disk status changes or failures, you typically have to go through the same sets of hassles. We found both of these options to be extremely inconvenient for us and our customers. If you want to monitor your raid array or repair a broken array, you typically have two options: 1 is to use the software relevant for the raid card under linux, which in our experience is often difficult to install and unreliable, or 2, you have to reboot the server and do the work in the bios. It’s also more difficult to boot off a hardware raid, so you either need to work around that problem during install time, which is a serious pain, or you need to install your OS onto a separate drive, at added hassle and expense. Every time you update the kernel, the drives connected to the card will disappear from the system until you rebuild driver support into the kernel. You also will need to set up drivers to configure the card, and often times these drivers have to be compiled into the linux kernel. If that card is ever no longer being sold or is temporarily unavailable, you need to do the same thing again with a new card.

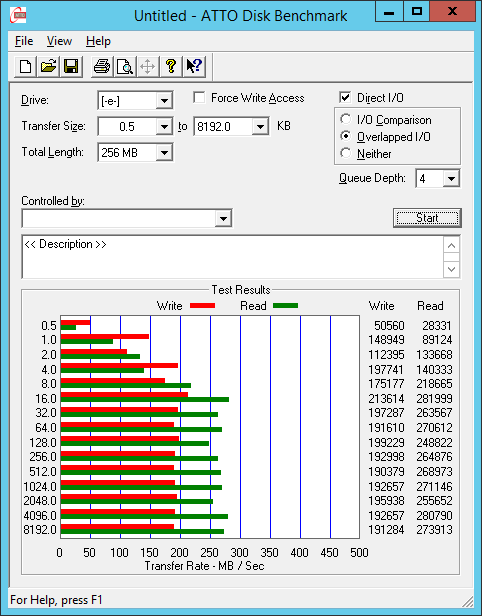

* The third thing we found is that hardware raid can be a real pain in the ass! In order to use hardware raid, you’ve got to first figure out what card you want to be using, and extensively test it’s quality and reliability. By using software raid, you can get the same performance and reliability of hardware raid, while buying dramatically less expensive hard drives. 1) The Raid Edition drives have TLER (a feature required for reliable operation in hardware raid), and 2) The Raid Edition drives cost a LOT more money. What this means is that, instead of using Western Digital Raid Edition drives for example, you can safely use Western Digital Black Edition drives, which are identical except for two key points. What we learned in our research was that software raid arrays do not have this problem, as linux software raid will patiently wait for a drive to finish its error recovery, and will only drop a drive from an array if it has truly failed. Desktop class hard drives will attempt to read the data for an extended period and be dropped from the raid array. Enterprise and raid edition drives are aware of this problem and will reply back to the raid controller that they simply were unable to read the data, and the raid card will rebuild the data from another drive without marking that drive as bad. What we found is that this problem stems from the fact that hardware raid cards will not wait longer than a few seconds for a drive to return data before they mark the drive as bad. We’ve been very concerned about this and researched the issue extensively. * Secondly, I’m sure you’ve heard that you should use enterprise or raid edition drives for your raid arrays, or you risk data loss. This limitation in most hardware raid cards was one strike against it. If your workload consists of reading large files for many simultaneous clients (common for serving videos), we found that a large stripe size can improve the performance of your drives by 4 fold or more. 
Hardware raid cards default to a low stripe size, and in many cases this cannot be raised to an optimal level. The bigger the stripe size, the better your random i/o performance, especially for larger files, and especially for reads. * First of all, for raid in general, and raid 10 in particular, the stripe size matters to performance… A LOT.

In the process of researching this decision, we learned a few things, some of them unexpected: Judging our options against these benchmarks, we had to decide quite a while ago, whether we should support software raid, hardware raid, or both.

* Solves the actual problem you are having I/O FLOOD believes in efficient, effective technology. What might surprise you however, is that we believe that in nearly all cases, software raid is far better than hardware raid.įirst, to put this in perspective, you have to keep in mind what we believe about technology. I get asked this question a lot: “Do I need hardware raid, or is software raid good enough?” You may have noticed I/O FLOOD only offers software raid, so it will come as no surprise to you that we think software raid is “good enough”.
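To make the stripe-size point more concrete, here is a simplified model (a sketch, not how any particular controller or the md driver actually schedules I/O): on a striped layout, consecutive chunks live on different drives, so a single read that crosses chunk boundaries ties up more than one drive. The chunk sizes, the 1 MiB request size, and the four-mirror-pair raid 10 are illustrative assumptions; on Linux software raid the chunk size is chosen when the array is created, via the `--chunk` option of `mdadm --create`.

```python
KiB = 1024
MiB = 1024 * KiB


def chunks_touched(offset: int, length: int, chunk_size: int) -> int:
    """Number of stripe chunks a read of `length` bytes starting at `offset` spans."""
    if length <= 0:
        return 0
    first_chunk = offset // chunk_size
    last_chunk = (offset + length - 1) // chunk_size
    return last_chunk - first_chunk + 1


def drives_busy(offset: int, length: int, chunk_size: int, stripe_width: int) -> int:
    """Drives (or mirror pairs, for raid 10) that one read keeps busy, assuming
    chunks are laid out round-robin across `stripe_width` stripe members."""
    return min(chunks_touched(offset, length, chunk_size), stripe_width)


if __name__ == "__main__":
    # Hypothetical workload: each client reads a video file in 1 MiB requests
    # from a raid 10 built from 4 mirror pairs.
    for chunk in (64 * KiB, 512 * KiB, 1 * MiB):
        n = drives_busy(0, 1 * MiB, chunk, stripe_width=4)
        print(f"{chunk // KiB:>5} KiB chunks: one 1 MiB read occupies {n} of 4 mirror pairs")
```

The fewer stripe members a single request occupies, the more requests from other clients the array can serve in parallel, which is the many-simultaneous-readers effect described above. This model does not predict a specific speedup; the fourfold figure is I/O FLOOD's own measurement.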
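The drive-dropping behaviour in the second point boils down to comparing two durations: how long a drive spends retrying an unreadable sector, and how long the layer above it is willing to wait. The sketch below models only that comparison. All figures are assumptions for illustration: “a few seconds” for the hardware card is taken as 8 s, the TLER limit as 7 s (a commonly quoted value), and the desktop drive’s retry time is made up. For Linux software raid it uses 30 s, the kernel’s default per-command timeout exposed in `/sys/block/<dev>/device/timeout`, which roughly bounds how long the kernel waits on a single drive request.

```python
from typing import Dict


def array_keeps_drive(recovery_s: float, timeout_s: float) -> bool:
    """True if the drive answers (even with "unreadable") before the layer
    above it gives up. In that case the bad sector can be rebuilt from
    redundancy; otherwise the whole drive is treated as failed."""
    return recovery_s <= timeout_s


# Illustrative numbers only (see the lead-in); these are not measurements.
TIMEOUTS_S: Dict[str, float] = {
    "hardware raid card": 8.0,     # assumed "a few seconds"
    "Linux software raid": 30.0,   # default /sys/block/<dev>/device/timeout
}
RECOVERY_S: Dict[str, float] = {
    "TLER drive": 7.0,             # assumed TLER cap
    "desktop drive": 20.0,         # made-up retry time for a weak sector
}

if __name__ == "__main__":
    for drive, recovery in RECOVERY_S.items():
        for layer, timeout in TIMEOUTS_S.items():
            verdict = "kept" if array_keeps_drive(recovery, timeout) else "dropped"
            print(f"{drive} under {layer}: {verdict}")
```

In this model a desktop drive whose retries outlasted the kernel timeout would be dropped by software raid as well; raising the per-device timeout value in sysfs is one way to give such drives more time.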

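`cat /proc/mdstat` is easy to read by eye, and it is just as easy to check from a script. Below is a minimal Python sketch that flags degraded arrays by looking for `_` in the member-status field (for example `[U_]`, where `_` marks a missing or failed member) and picks out members tagged `(F)`. The sample text and device names are hypothetical, and the parser only handles the common layout shown; `mdadm --detail` gives a more complete per-array view.

```python
import os
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical /proc/mdstat contents: md1 has lost one member.
SAMPLE_MDSTAT = """\
Personalities : [raid1] [raid10]
md0 : active raid10 sdd1[3] sdc1[2] sdb1[1] sda1[0]
      1953260544 blocks super 1.2 512K chunks 2 near-copies [4/4] [UUUU]

md1 : active raid1 sdb2[1](F) sda2[0]
      488254464 blocks super 1.2 [2/1] [U_]

unused devices: <none>
"""

HEADER_RE = re.compile(r"^(md\S+)\s*:\s*(\S+)")           # "md0 : active ..."
LEVEL_RE = re.compile(r"\b(raid\d+|linear|multipath)\b")
FAILED_RE = re.compile(r"(\S+?)\[\d+\]\(F\)")              # "sdb2[1](F)" -> "sdb2"
STATUS_RE = re.compile(r"\[(\d+)/(\d+)\]\s*\[([U_]+)\]")   # "[2/1] [U_]"


@dataclass
class MdArray:
    name: str
    state: str
    level: Optional[str] = None
    expected: Optional[int] = None   # members the array should have
    active: Optional[int] = None     # members currently working
    status: Optional[str] = None     # e.g. "UU_U"; "_" = missing/failed
    failed_members: List[str] = field(default_factory=list)

    @property
    def degraded(self) -> bool:
        return self.status is not None and "_" in self.status


def parse_mdstat(text: str) -> List[MdArray]:
    arrays: List[MdArray] = []
    current: Optional[MdArray] = None
    for line in text.splitlines():
        header = HEADER_RE.match(line)
        if header:
            current = MdArray(name=header.group(1), state=header.group(2))
            level = LEVEL_RE.search(line)
            if level:
                current.level = level.group(1)
            current.failed_members = FAILED_RE.findall(line)
            arrays.append(current)
            continue
        status = STATUS_RE.search(line)
        if current is not None and status and current.status is None:
            current.expected = int(status.group(1))
            current.active = int(status.group(2))
            current.status = status.group(3)
    return arrays


if __name__ == "__main__":
    path = "/proc/mdstat"
    if os.path.exists(path):
        with open(path) as f:
            text = f.read()
    else:
        text = SAMPLE_MDSTAT
    for array in parse_mdstat(text):
        if array.degraded:
            print(f"{array.name} ({array.level}) is DEGRADED: [{array.status}], "
                  f"failed members: {array.failed_members or 'none listed'}")
```

Run from cron, a check like this is one lightweight way to notice a failed drive before a second one fails.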
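The repair and notification steps map onto standard `mdadm` commands. As a hedged sketch, the helper below only builds the command lines for swapping out a failed member (mark it failed, remove it, add the replacement) and for sending a test alert through `mdadm`’s monitor mode, which mails the address configured on the `MAILADDR` line of `mdadm.conf`. The array and device names are hypothetical, the replacement partition is assumed to already exist with a suitable size, and by default the script only prints the commands rather than running them.

```python
import shlex
import subprocess
from typing import List


def replace_member_commands(array: str, failed: str, replacement: str) -> List[List[str]]:
    """mdadm invocations to swap a failed member of `array` for `replacement`."""
    return [
        ["mdadm", "--manage", array, "--fail", failed],       # mark the member faulty
        ["mdadm", "--manage", array, "--remove", failed],     # detach it from the array
        # ...physically swap the disk and partition it before the next step...
        ["mdadm", "--manage", array, "--add", replacement],   # md rebuilds onto the new member
    ]


def test_alert_command() -> List[str]:
    """Ask mdadm to send a test alert for every array listed in mdadm.conf."""
    return ["mdadm", "--monitor", "--scan", "--oneshot", "--test"]


def run(commands: List[List[str]], dry_run: bool = True) -> None:
    for argv in commands:
        print(("would run: " if dry_run else "running: ") + shlex.join(argv))
        if not dry_run:
            subprocess.run(argv, check=True)


if __name__ == "__main__":
    # Hypothetical device names; dry run by default, so nothing is executed.
    run(replace_member_commands("/dev/md1", "/dev/sdb2", "/dev/sdc2"))
    run([test_alert_command()])
```

Run as root over SSH, these are roughly the “couple of SSH commands” the article refers to; the rebuild’s progress then shows up in `cat /proc/mdstat`.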